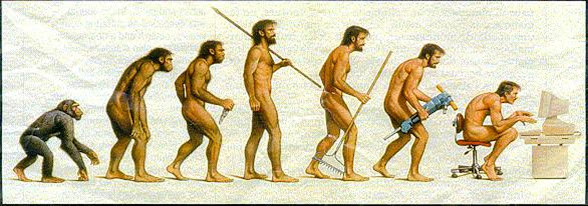

Since this is a holiday Monday, I thought we would take a leisurely look at one of my favourite and most vexing subjects: a lack of critical thinking amongst a good chunk of the population. (We suffer from a dearth of original ideas and thought, folks, and will blindly follow anyone who feeds us simplistic solutions to complex situations or concepts.)

On the surface, it applies to everything from blindly following whatever religious dogma we grew up with to an unnatural belief in the various 'conspiracy theories' making the rounds on social media and in general discourse.

BUT, it goes much deeper than that, bunky!

What matters is whether something feels like the truth. Does it feel like your job has been stolen? Does it feel like Hillary Clinton doesn’t care about “real” people? An American who’s watched economic inequality balloon since the 1970s may well feel these things. Such disenfranchisement, combined with a wild-west media landscape in which retweetable quotes take precedence over verifiable facts, produces the toxic intellectual environment that Daniel J. Levitin confronts in his smart, timely and massively useful primer for “critical thinking in the information age,” A Field Guide to Lies.

Although this problem of "truthiness" appears to be a bit of fluff scattered over the social landscape, in actual fact it is a spreading cancer that could ultimately kill its host. (Us!)

As Levitin notes, we’ve created more information in the last five years than in all our preceding history. Meanwhile, we message, announce and declaim at an unprecedented rate. Unfortunately, the democratization of broadcasting systems brings with it a profusion of misinformation, half-truths and no-truths. Sometimes this is produced by what Levitin calls the “lying weasels” of the Internet but it’s very often the result of simple confusions on the part of everyday people – confusions that his book means to dispel.

New forms of media require new forms of literacy:

Unlike previous realities, Levitin writes, the age of screens has “no central authority to prevent people from making claims that are untrue.” And so his book calls for independent analysis: Anyone who consumes easy, cheap, fast information must understand how to verify that information themselves. It should be clear by now that nobody else is doing it for you.

Nobody stands outside the fog:

A professor of psychology and behavioural neuroscience at McGill University, Levitin has a mission not unlike the mission of scientists in the 17th century who sought to convince a disbelieving public that popular stories about the heavens were not always true. And his solution is the same as theirs; he proposes that we recall the basic principles of the scientific method. “The plural of anecdote is not ‘data,’” he warns.

We must look clearly, unsparingly, beyond what an argument makes us feel, and learn to spot logical fallacies; we must learn to tell the difference between inductive and deductive reasoning, and learn how to read statistics. The “Field Guide” Levitin’s created really is as instructive in these matters as its title suggests. At times it does veer toward a textbook tone but this is because the author sincerely means to instruct.

There is a basic logical fallacy, for example, called post hoc, ergo propter hoc – basically, “B happens after A, so A must be causing B.” This is the line of reasoning used by those who argue that the rise in autism can be blamed on vaccines (or WiFi, or GMOs). Between 1990 and 2010, the number of children diagnosed with autism did indeed rise six-fold. And a physician called Andrew Wakefield published a paper in the prestigious Lancet journal arguing new vaccines were to blame. (The rise in autism, by the way, is actually accounted for by a widening of the definition of “autism” and the fact that people are becoming pregnant at later ages. -Ed.)

Although his work has been exposed as fraudulent, the argument continues to be batted around online because (post hoc, ergo propter hoc) people think that correlation implies causation. Levitin helps us see the insanity of such arguments.

Case in point: If I review this book and, the next day, Toronto is not leveled by an earthquake, does that mean my reviews are keeping the city intact?

Some of these mistakes in thinking are thanks to a fear of numbers. We are easily swayed by the use of “averages,” for example. But averages can be wildly misleading. Levitin points out that “On average, humans have one testicle.” Our confused understanding of basic terms such as “mean,” “median” and “mode” allows partisan forces to shape statistics in any way that serves their purposes.
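
To put some numbers on the point about averages, here is a small Python sketch. The salary figures are invented purely for illustration and do not come from Levitin's book or the review; the only tools used are `mean`, `median` and `mode` from Python's standard `statistics` module.

```python
from statistics import mean, median, mode

# Hypothetical annual salaries: five ordinary earners and one executive.
# These figures are invented for illustration only.
salaries = [30_000, 32_000, 32_000, 35_000, 40_000, 1_000_000]

average = mean(salaries)      # pulled far upward by the single outlier
middle = median(salaries)     # midpoint of the sorted list
most_common = mode(salaries)  # the value that occurs most often

print(f"mean:   {average:,.0f}")
print(f"median: {middle:,.0f}")
print(f"mode:   {most_common:,.0f}")
```

With these made-up numbers the mean lands near 195,000 while the median is 33,500 and the mode is 32,000. All three can honestly be called "the average," which is exactly the wiggle room a partisan needs.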

Meanwhile, charts and graphs are also far from clear-cut. One could show that the number of crimes in a neighbourhood was “sky-rocketing” even while the actual rate of crime (the number of crimes for every 1,000 people) has gone down.
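
A quick sketch of how that can happen, again in Python. The neighbourhood, its population and its crime counts are all hypothetical, and `crimes_per_1000` is just a helper name made up for this example.

```python
def crimes_per_1000(crimes: int, population: int) -> float:
    """Return the number of crimes for every 1,000 residents."""
    return 1000 * crimes / population


# Hypothetical neighbourhood that doubled in size between two years.
earlier = {"crimes": 100, "population": 10_000}
later = {"crimes": 150, "population": 20_000}

earlier_rate = crimes_per_1000(**earlier)
later_rate = crimes_per_1000(**later)

# The raw count rose by half, yet the per-person rate fell.
count_change = (later["crimes"] - earlier["crimes"]) / earlier["crimes"]
rate_change = (later_rate - earlier_rate) / earlier_rate

print(f"crimes: {earlier['crimes']} -> {later['crimes']} ({count_change:+.0%})")
print(f"rate per 1,000: {earlier_rate} -> {later_rate} ({rate_change:+.0%})")
```

In this invented case the number of crimes is up 50% while the rate per 1,000 people is down 25%. A chart of the first number looks like a crime wave; a chart of the second looks like a safer neighbourhood.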

Even more troubling, though, are the mistakes we make when consuming rhetorical arguments. We blindly accept the opinions of the wrong people, forgetting that “intelligence and experience tend to be domain-specific, contrary to the popular belief that intelligence is a single, unified quantity.”

Such reasoning would have us give credence to the racist views of William Shockley, for example, because he was awarded the Nobel Prize in physics.

Additionally, we fall prey to purveyors of “counterknowledge,” which is misinformation that someone has actively clothed in the garbs of fact. It “runs contrary to real knowledge” but gets spread around because it has greater social currency than truth.

http://www.theglobeandmail.com/arts/books-and-media/book-reviews/review-daniel-j-levitins-a-field-guide-to-lies-is-smart-timely-and-massively-useful/article31690289/
